Context

The Rural Adversity Mental Health Program (RAMHP) connects people who need mental health assistance in rural and remote New South Wales (NSW), Australia with appropriate services and resources. Currently, 19 RAMHP coordinators are located across nine rural local health districts.

In 2016, the program underwent a comprehensive review and reorientation to meet new state and federal priorities1. This review found that the existing data collection methods (a simple Excel spreadsheet and multiple disconnected data sources, such as administrative records) were inefficient and produced unreliable and limited information about program activities. Poor data reliability meant that RAMHP managers lacked detailed and accurate information about coordinators’ work, making it difficult to monitor the alignment of activities with program plans1. Furthermore, coordinators could not follow and learn from each other’s activities, which contributed to reduced program cohesion1. For more information about the program and its review, see Maddox et al1.

The present article describes the development of a mobile data collection tool, its implementation challenges and its resultant benefits. It is intended to inform service providers interested in employing app technology, currently underutilised by health services2.

Issues

During the review and reorientation of RAMHP, data collection improvements were identified as a priority to assess progress against a newly revised program logic model (PLM)1. A PLM describes the relationship between a program’s activities, outputs and outcomes3. To aid data entry by the coordinators and facilitate data analysis, monitoring and evaluation by management, a mobile data collection tool was developed using a commercially available form-building app. Apps are increasingly used for the collection of health-related data. For example, they can be used to map place-based determinants of health2, or to monitor mental or physical health interventions4-6. Although there is little literature about the use of apps for collection of activity data by program staff, there is evidence that mobile technology can provide timely, efficient and comprehensive data collection2,7.

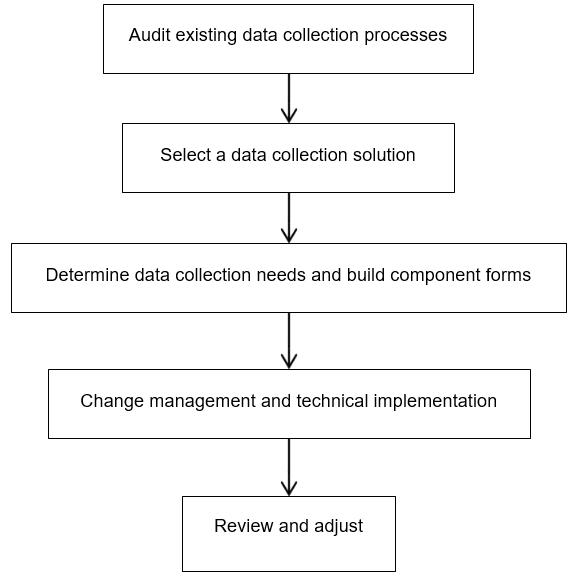

The steps shown in Figure 1 and described here ensured that the data collection process would meet the program’s data needs and be acceptable to the coordinators.

Figure 1: Data collection tool development steps.

Audit existing data collection processes

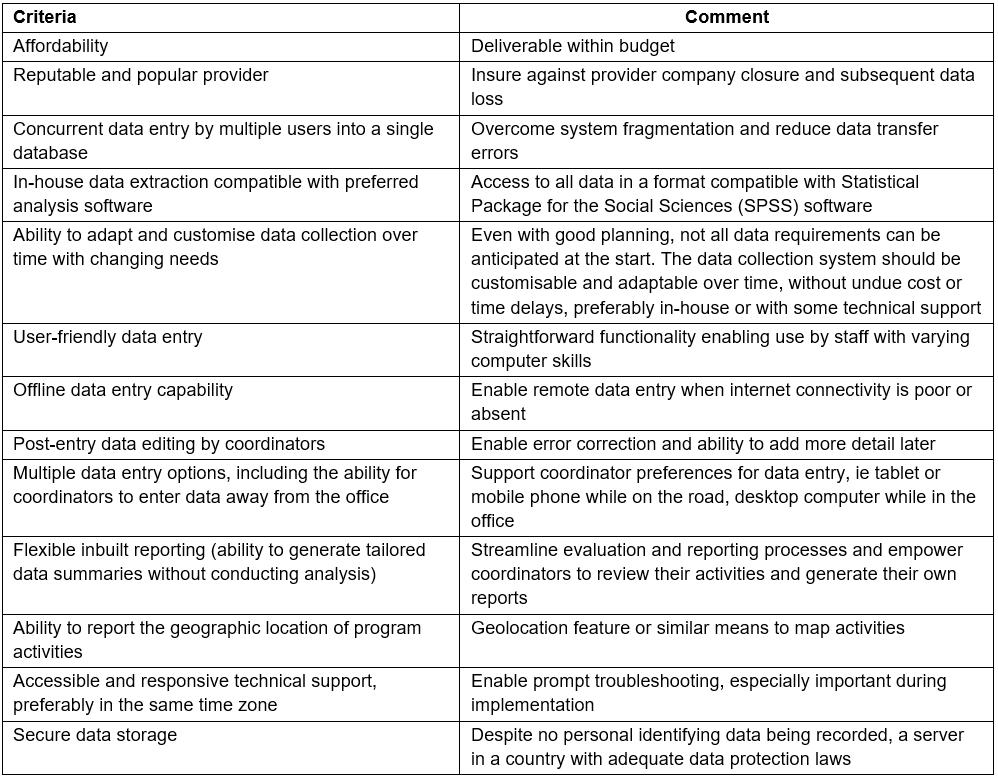

An internal audit revealed data were unreliable and poorly matched to program objectives. Redundant and missing data were part of an unnecessarily burdensome process. Subsequent interviews with RAMHP managers and coordinators sought insights into the advantages and disadvantages of the existing data collection system1. These were used to agree on criteria for a new system (summarised in Table 1).

Coordinators agreed that the Excel spreadsheet data collection tool was completed inconsistently due to poor data field definitions and inadequate training in its use. Furthermore, coordinators made notes while working away from the office and later transcribed this information to Excel, introducing the potential for transcription and omission errors. In addition, there was a low perceived need to record data among coordinators due to poor feedback regarding the supplied data. Without effective feedback, coordinators prioritised their work opportunistically, via requests, rather than strategically. Coordinators reported that their work was recorded inadequately due to a lack of qualitative information to contextualise their activity metrics.

Table 1: Criteria for a new data collection system

Select a data collection solution

An app was chosen because coordinators travel frequently and spend little time on office computers. Coordinators already relied on tablets for email, website and document access. An app offered a means to enter activity data in real time, negating the need to record data twice. Furthermore, it would store all data centrally, removing transcription risk and costs incurred with multiple Excel spreadsheets.

With the aid of a public sector IT adviser, and using the criteria compiled during the audit (Table 1), five app-building platforms were assessed for their capacity to deliver a suitable app. Two platforms, FastField and Formitize, met the key criteria most closely, and free trials enabled comparison of the functions and usability of each. Formitize was chosen because its Australian provider was able to offer good support to users. While Formitize generally serves non-health users, its features are compatible with the RAMHP data collection needs.

Determine data collection needs and build component forms

The data to be collected were guided by the PLM1 and stakeholder reporting requirements for RAMHP management, the Ministry of Health and local health districts. Five forms were created to capture coordinators’ quantitative and qualitative data: linking a person to a mental health service, delivering training, providing information at a community event, attending a professional meeting or generating mental health promotion via mass media. To protect client privacy8, personal identifying information was not included.
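For readers planning a similar tool, the shape of one form submission can be sketched in code. The following is a minimal illustration only, not the structure used in Formitize: the class names, field names and example values are all hypothetical. It shows the five activity types described above, a place for both quantitative and qualitative data, and the deliberate absence of personal identifying fields.

```python
from dataclasses import dataclass, field
from datetime import date
from enum import Enum


class ActivityType(Enum):
    # One member per form described in the text.
    SERVICE_LINK = "linking a person to a mental health service"
    TRAINING = "delivering training"
    COMMUNITY_EVENT = "providing information at a community event"
    PROFESSIONAL_MEETING = "attending a professional meeting"
    MEDIA_PROMOTION = "generating mental health promotion via mass media"


@dataclass
class ActivityRecord:
    # All field names are assumptions made for illustration.
    activity_type: ActivityType
    activity_date: date
    locality: str                               # place of activity, not a person's address
    counts: dict = field(default_factory=dict)  # quantitative items, e.g. participants
    narrative: str = ""                         # qualitative context for the metrics
    # Deliberately no name, date of birth or contact details,
    # reflecting the decision not to collect identifying information.


# Illustrative values only; not program data.
example = ActivityRecord(
    activity_type=ActivityType.TRAINING,
    activity_date=date(2017, 3, 1),
    locality="Example Town",
    counts={"participants": 12},
    narrative="Workshop delivered to a local community group.",
)
```

Keeping the activity type as a fixed set of options, rather than free text, is one way to address the inconsistent field definitions identified in the audit.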

Change management and technical implementation

Using an app, rather than Excel, to collect data was a considerable change for coordinators, so implementation planning was necessary to ensure acceptance and a smooth transition. Although coordinators took part in the development of the PLM and understood the reasoning behind data collection1, there was apprehension about using unfamiliar technology. To manage this, regular communication about the purpose, benefits and development of the app took place before implementation. As with other quality improvement activities in RAMHP, the app was first trialled by three coordinators, selected for their technological skills. These coordinators then demonstrated the app to their peers during a face-to-face team meeting, sharing their positive experience of field-testing it. Individual support and group training were also provided. In the first months of use, the RAMHP evaluation manager reviewed monthly activity reports to monitor data entry accuracy and provide individualised feedback.

Two main implementation issues occurred. First, soon after deployment the app ‘froze’ and ceased to function for some coordinators. This was caused by tablet-related IT maintenance problems and was overcome by further training and support for coordinators. Second, an important automated reporting feature was not provided by the vendor. Consequently, all analysis and reporting have been managed manually.

Review and adjust

After 3 months’ use of the app, a review was conducted to assess whether improvements could be made. With coordinators comfortable with the app’s functions, it was possible to increase the number of questions and response options in the forms, thereby improving data capture. New response options were identified by analysing the open-text (‘other’) responses in the forms. The first 3 months of data were recoded to fit the new fields. It was important to optimise data collection early in the funding period to establish a baseline for ongoing comparability; however, a small number of refinements have been implemented more recently.
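The review of open-text responses and the subsequent recoding can be illustrated with a short sketch. This is not the procedure used by the program, where analysis was managed manually; it assumes, hypothetically, that each exported record is a dictionary with a `response` field and an `other_text` field. The first function counts recurring ‘other’ answers as candidates for new response options, and the second moves matching answers into those options.

```python
from collections import Counter


def normalise(text):
    """Lower-case and collapse whitespace so near-identical answers match."""
    return " ".join(text.lower().split())


def frequent_other_responses(records, min_count=2):
    """Count open-text answers that were entered with the 'other' option."""
    counts = Counter(
        normalise(r["other_text"])
        for r in records
        if r.get("response") == "other" and r.get("other_text")
    )
    return [(text, n) for text, n in counts.most_common() if n >= min_count]


def recode(records, new_options):
    """Return copies of the records with matching 'other' answers recoded.

    new_options maps a normalised open-text answer to a new option label.
    """
    recoded = []
    for record in records:
        updated = dict(record)  # leave the original record untouched
        key = normalise(updated.get("other_text") or "")
        if updated.get("response") == "other" and key in new_options:
            updated["response"] = new_options[key]
            updated["other_text"] = ""
        recoded.append(updated)
    return recoded


# Illustrative records only; not program data.
records = [
    {"response": "other", "other_text": "Farm contractor"},
    {"response": "other", "other_text": "farm  contractor"},
    {"response": "farmer", "other_text": ""},
    {"response": "other", "other_text": "Vet"},
]
candidates = frequent_other_responses(records)
recoded = recode(records, {"farm contractor": "farm contractor"})
```

Recoding copies of the records, rather than overwriting them, keeps the original entries available if a new option later proves to be poorly defined.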

Lessons learned

All steps taken to develop and implement the app – such as choosing a provider, testing functionality, determining the data to be collected, deciding how analysis would occur and training staff – required considerable investment in time, resources and strategic planning9. This investment was crucial to ensuring that the app provided worthwhile data and to minimising subsequent changes. After 3 months, the coordinators were comfortable with the technology, and data collection enabled powerful analysis and reporting. Considering the logistics of evaluation and reporting at the program redesign stage assisted with the smooth implementation of the new data collection system.

Improved data collection

There has been a considerable and consistent increase in the program data collected since the introduction of the app. For example, in the 6 months preceding the app, the number of reported people linked to mental health services was 391, compared with 929 in the 6 months after the app was introduced. Coordinator feedback indicated that the app’s accessibility and ease of use, coupled with the monthly performance feedback, increased the motivation of coordinators to enter data, leading to these increases rather than dramatic changes in work practices.
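The size of this change can be checked with simple arithmetic on the two figures reported above. The function name below is an assumption made for illustration.

```python
def relative_increase(before, after):
    """Proportional change from an earlier count to a later count."""
    return (after - before) / before


before, after = 391, 929  # reported links in the 6 months before and after the app
change = relative_increase(before, after)
print(f"Relative increase: {change:.1%}")  # prints "Relative increase: 137.6%"
print(f"Ratio: {after / before:.2f}")      # prints "Ratio: 2.38"
```

In line with the coordinator feedback, these figures are best read as a change in how completely activity was recorded, not as a measure of change in the work performed.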

Useful and timely data have increased coordinators’ awareness of the impact of their activities, and facilitated self-management. For example, coordinators receive feedback about the characteristics of training participants (gender, location and occupation), highlighting underrepresented groups to target.

Moreover, since the app aligned with the PLM, co-developed with coordinators, its use has reinforced program objectives consistently and encouraged coordinators to value the data collection process. Further, managers have a more accurate picture of coordinators’ activities, to help determine program strategic priorities.

Improved communication

The specific questions within the app’s forms mean that RAMHP is able to share detailed, timely and accurate information with important partners and stakeholders. The geographic location of activities can be mapped, which is useful for demonstrating reach, such as into remote communities. Furthermore, the demographic data collected make clear the characteristics of the people linked to mental health services, such as age, gender and mental health issues experienced. Qualitative descriptions of encounters may also be recorded, and these are used to provide narrative depth to reports and context to statistics. This information allows RAMHP to confidently articulate the program’s value, raise its profile and present high-quality evidence to key stakeholders.

Acknowledgements

Thanks to Tessa Caton for comments on the concept.

References
